$ cat skills-dashboards.md

Skills > Dashboards

When we built the first AI product we were racing to launch. We had a vibe-coded front end so we could manage API keys and teams internally. Thing is, it is the true definition of vibe-coded, i.e. garbage for long-term use. We actually call it the garbage dashboard.

Since we are now building a customer-facing experience meant for long-term use, I started to ask in a meeting if we could just use the new dashboard instead, and mid-sentence I stopped myself. The new dashboard doesn't have all the functionality yet. That's the whole point. We're launching in a week. Engineering time is locked. Nobody is going to vibe-code an entire admin interface in two weeks. (We already tried that once. Cue garbage dashboard's music.)

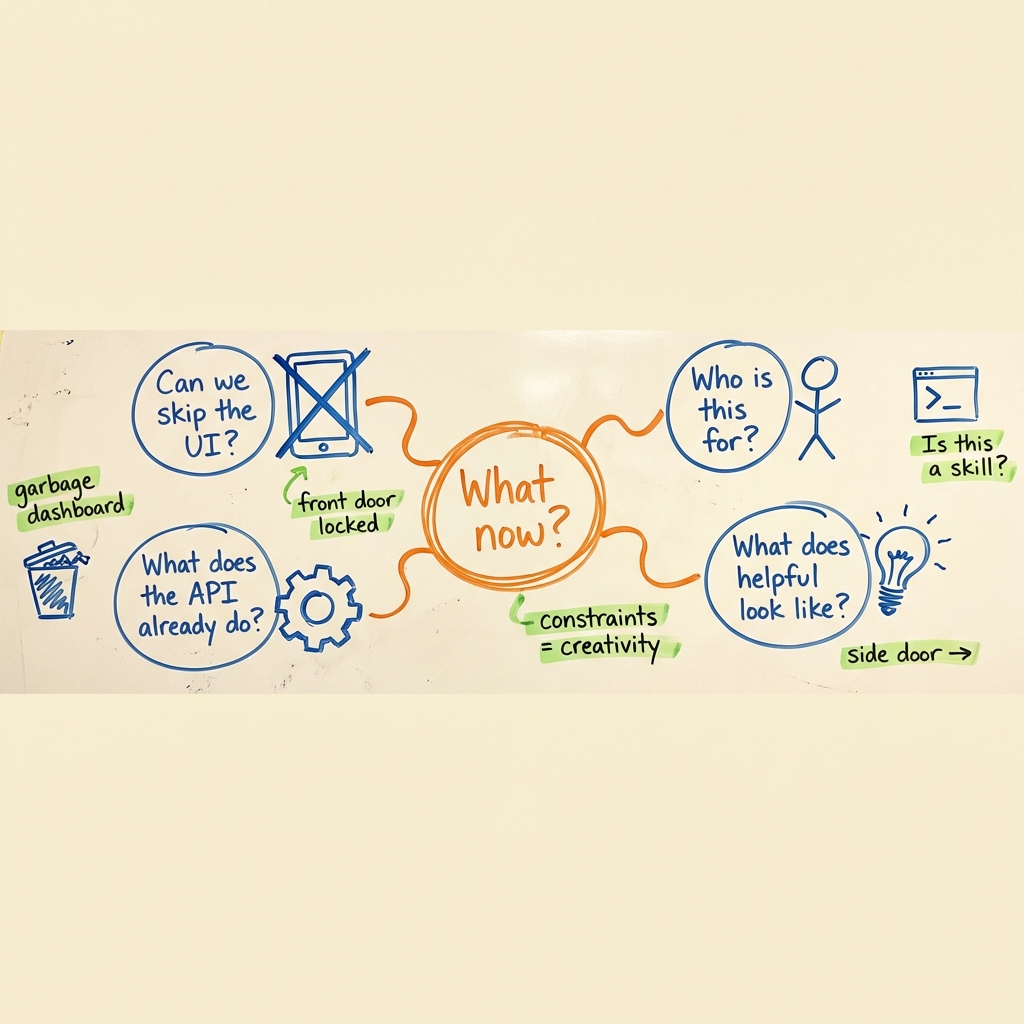

So the obvious path was gone. Can't fix the old thing, can't use the new thing. What now?

And then it hit me. Yeah, AI built a garbage dashboard, but AI is still a tool, and when you use the tool correctly it can solve hard problems. So I said, "Let's use the API. In fact, why don't I just write a skill?"

the constraint is the brief

Ruthless prioritization gets talked about like it's just saying no to things. That's part of it, sure. But the harder part is spotting the side door when the front door is locked. The garbage dashboard can't be fixed on this timeline, but the API underneath it works fine. A skill that wraps the API isn't a compromise. It's the same outcome through a different path.

I have a rule I've been following for a while now. If I have to do something a second time when working with AI, it becomes a skill. The first time, fine, I'll figure out the API calls. The second time? No. That's a signal. That's a workflow that wants to be automated.

There's this idea floating around that building with AI means you describe what you want and it's perfect after one attempt. That is not how this works.

I sat down with Claude Code and we mapped out the problem first. What am I actually trying to do here? I need to list my users. I need to see which teams I have. I need to create a key. Simple enough on paper.

But every single one of those is a UX decision. When I say "list my users," what comes back? A raw JSON blob? A formatted table? Do I want to see every user across every region, or just the ones in the team I'm working with? When I create a key, what inputs do I need to provide, and what should the skill assume for me? What happens when something goes wrong? Does it just throw an error, or does it tell me what to do next?

This is where it gets interesting. The AI does the heavy lifting on the code. But the product thinking is still entirely mine. Nobody is going to tell Claude Code that when a key creation fails, the error message should point the user toward checking their team permissions. That's a decision I make because I know the workflow and I know where people get stuck. I've been the person getting stuck.
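To make those decisions concrete, here is a minimal Python sketch of what two of them could look like in code: a formatted table scoped to one team instead of a raw JSON blob, and a key-creation error that tells you what to do next. Everything in it is hypothetical. The `User` fields, the function names, and the idea that a permissions failure shows up as a 403 are assumptions for illustration, not our actual API or the actual skill.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class User:
    # Hypothetical shape; a real API response will have its own fields.
    name: str
    email: str
    team: str


def format_users(users: list[User], team: Optional[str] = None) -> str:
    """Render users as an aligned text table, optionally limited to one team."""
    rows = [u for u in users if team is None or u.team == team]
    if not rows:
        return f"No users found for team '{team}'." if team else "No users found."
    table = [("NAME", "EMAIL", "TEAM")] + [(u.name, u.email, u.team) for u in rows]
    # Pad each column to its widest cell so the output lines up.
    widths = [max(len(row[i]) for row in table) for i in range(3)]
    return "\n".join(
        "  ".join(cell.ljust(w) for cell, w in zip(row, widths)).rstrip()
        for row in table
    )


def explain_key_error(status: int, team: str) -> str:
    """Turn a failed key-creation status into a next step (status codes assumed)."""
    if status == 403:
        return (
            f"Could not create a key for team '{team}'. "
            "Check that you have permission to manage keys for this team."
        )
    if status == 404:
        return f"Team '{team}' was not found. List your teams to check the name."
    return f"Key creation failed with status {status}. Check the API response for details."
```

Calling `format_users(users, team="core")` returns only that team's rows, and `explain_key_error(403, "core")` returns the permissions hint. None of this is hard code. The point is that each branch is a product decision somebody had to make.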

the reminder

Every time I build a skill, I get the same reminder. This is product management. The tool is different, the timeline is compressed, but the work is the same. You're making a series of decisions about someone's experience.

Even when "someone" is a handful of internal users. Especially then, actually. Internal tools are where bad habits breed. They get built fast, they never get fixed, and six months later everyone is working around the same broken thing and nobody remembers that it was supposed to be temporary. I've seen it happen at every company I've worked at. The internal thing that was "just for now" becomes "just forever."

So when I'm building a skill, I'm thinking about the same stuff I'd think about for any user-facing product. What's the input? What does a helpful output look like? What does the unhappy path feel like? The scope is smaller. The stakes feel lower. But the discipline is the same, and that's the point.

The skill works. I tested it. I can list users, see my teams, create keys. It does what I need.

And then I sent it to the engineers to review.

This isn't perfectionism. It's the habit that keeps technical debt from growing quietly in a corner. If other people are going to touch this thing, and they will, then it needs to be built with some care. The devs are going to look at it and tell me if I did something weird with the API calls, if there's an edge case I missed, if the error handling is sloppy. Good. That's what I want. A few people poking at it now saves everyone from debugging it later.

There's a version of this story where I build the skill, it works, and I move on. That version is faster. It's also how you end up with a dozen internal tools that all kind of work and nobody fully trusts. I'd rather take the extra hour.

This story looks like it's about AI and ruthless priorities. It is that. I had a problem, the normal path was blocked, and I found another way through using tools that didn't exist a year ago.

But what I keep coming back to is the part that didn't change. The problem-mapping. The use case thinking. The "who is this for and what does helpful look like" questions. The decision to get it reviewed even though it's small. That's all just product management. The tools get faster and the timelines compress and the AI writes the code I can't write myself. And the thing that still matters most is knowing what to build and why.

The garbage dashboard is still broken. The skill works great. And next week we launch.

Pro tip: Before you start building, get Claude Code to interview you. Copy and paste this:

I have a rough idea for [thing]. Ask me 2-4 questions about what it does, what problem it solves, and who it's for. One at a time. Skip anything that's already obvious. When we're done, give me a short spec I can build from.

Then save it as a slash command so you never skip the step!
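If you'd rather script that step, here is a small Python sketch. It assumes Claude Code picks up project-level custom commands from Markdown files in `.claude/commands/`, with the filename as the command name and `$ARGUMENTS` standing in for whatever you type after the command. Check the current Claude Code docs before relying on either detail. The command name `interview` and the function name are just placeholders I picked.

```python
from pathlib import Path

# The interview prompt from above, with [thing] swapped for $ARGUMENTS
# (assumed to be the placeholder Claude Code fills with the text after the command).
INTERVIEW_PROMPT = (
    "I have a rough idea for $ARGUMENTS. Ask me 2-4 questions about what it does, "
    "what problem it solves, and who it's for. One at a time. Skip anything "
    "that's already obvious. When we're done, give me a short spec I can build from.\n"
)


def save_slash_command(project_root: Path, name: str = "interview") -> Path:
    """Write the prompt to <project_root>/.claude/commands/<name>.md and return the path."""
    commands_dir = project_root / ".claude" / "commands"
    commands_dir.mkdir(parents=True, exist_ok=True)
    path = commands_dir / f"{name}.md"
    path.write_text(INTERVIEW_PROMPT, encoding="utf-8")
    return path
```

Run `save_slash_command(Path("."))` from the project root and, if those assumptions hold, the prompt should be available as `/interview` in that project.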

$ _
